There is no online account sign-up needed, no privacy policy to review, and no advertisements. It does not attempt to guess what you want to do, and keeps everything on your local machine. If you feel comfortable sending your voice commands through the Internet for someone else to process, or are not comfortable with Linux and the command line, I recommend taking a look at Mycroft. See Rhasspy if you are looking for a Snips replacement, and avoid investing time and effort in a platform you cannot control! Unfortunately, they were purchased by Sonos and have since shut down their online services (required to change your Snips assistants). Until relatively recently, Snips offered an impressive amount of functionality offline and was easy to interoperate with. It is possible to use Dragon in Wine on Linux or via a virtual machine, but is difficult to set up and not officially supported by Nuance.

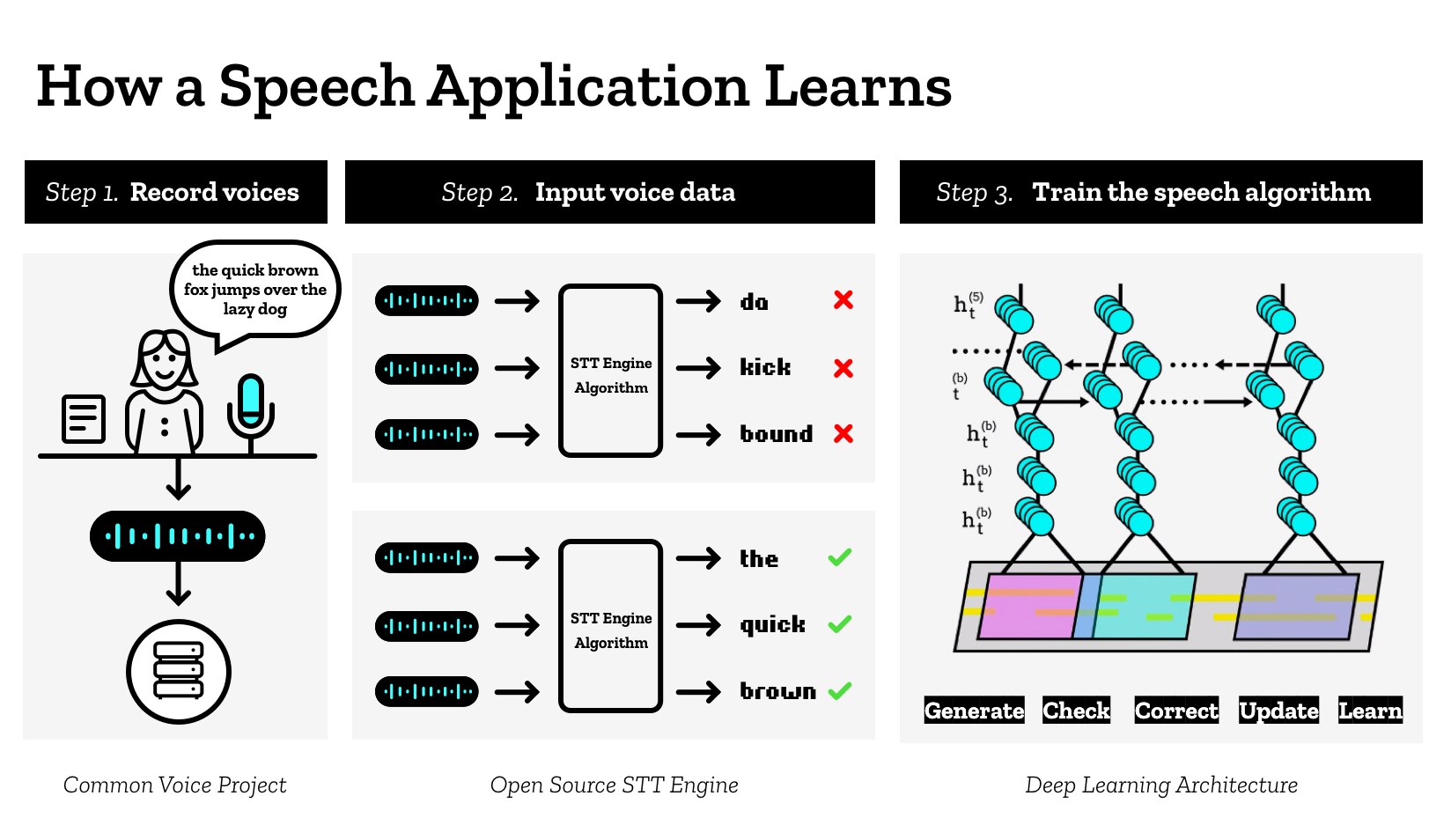

Great! Unfortunately, Dragon requires Microsoft Windows to function. Despite the high accuracy and deep integration with other services, this approach is too brittle and uncomfortable for me.ĭragon Naturally Speaking offers local installations and offline functionality. Additionally, they keep a copy of everything you say on their servers. Why not just use Google, Dragon, or something else?Ĭloud-based speech and intent recognition services, such as Google Assistant or Amazon’s Alexa, require a constant Internet connection to function. Intents and slot values are equally likely.A voice commands contains at most one intent.Speech can be segmented into voice commands by a wake word + silence, or via a push-to-talk mechanism.Voice2json is designed to work under the following assumptions: When trained, voice2json will transform audio data into JSON objects with the recognized intent and slots. Portions of (commands can be) as containing slot values that you want in the recognized JSON. This can be as simple as a listing of and sentences:Ī small templating language is available to describe sets of valid voice commands, with, (alternative | choices), and. Voice2json needs a description of the voice commands you want to be recognized in a file named sentences.ini. Audio input/output is file-based, so you can receive audio from any source. All of the available commands are designed to work well in Unix pipelines, typically consuming/emitting plaintext or newline-delimited JSON.This means you can change referenced slot values or add/remove intents on the fly. Re-training is fast enough to be done at runtime (usually By describing your voice commands with voice2json’s templating language, you get more than just transcriptions for free. Training produces both a speech and intent recognizer.Voice2json is more than just a wrapper around pocketsphinx, Kaldi, DeepSpeech, and Julius!
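To make the templating idea more concrete, here is a minimal Python sketch that expands a template containing `[optional words]` and `(alternative | choices)` into every sentence it describes. This is a toy illustration only, not voice2json's actual implementation: the `expand` function is a hypothetical helper, and it ignores shared rules, slot annotations, and everything else the real template language offers.

```python
import re
from itertools import product

# Brackets, parentheses and pipes are single tokens; everything else is a word.
TOKEN = re.compile(r"[\[\]()|]|[^\s\[\]()|]+")


def expand(template: str) -> list[str]:
    """Return every sentence described by a toy template (hypothetical helper)."""
    tokens = TOKEN.findall(template)
    pos = 0

    def parse_alternatives(closer):
        # alternatives := sequence ('|' sequence)*
        nonlocal pos
        results = parse_sequence(closer)
        while pos < len(tokens) and tokens[pos] == "|":
            pos += 1  # skip "|"
            results += parse_sequence(closer)
        return results

    def parse_sequence(closer):
        # sequence := (word | '(' alternatives ')' | '[' alternatives ']')*
        nonlocal pos
        results = [[]]  # start with a single empty sentence
        while pos < len(tokens) and tokens[pos] not in (closer, "|"):
            tok = tokens[pos]
            pos += 1
            if tok == "(":
                choices = parse_alternatives(")")
                pos += 1  # skip ")"
            elif tok == "[":
                # An optional group is a set of alternatives plus "nothing".
                choices = parse_alternatives("]") + [[]]
                pos += 1  # skip "]"
            else:
                choices = [[tok]]
            # Append every choice to every sentence built so far.
            results = [left + right for left, right in product(results, choices)]
        return results

    return [" ".join(words) for words in parse_alternatives(None)]


if __name__ == "__main__":
    for sentence in expand("turn (on | off) the [living room] light"):
        print(sentence)
```

Even this small template describes four distinct voice commands, which hints at why a grammar is a compact way to cover large command sets.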

Voice2json is best suited to:

- Sets of voice commands that are described well by a grammar
- Commands with uncommon words or pronunciations
- Commands or intents that can vary at runtime

It can be used to:

- Add voice commands to existing applications or Unix-style workflows
- Provide basic voice assistant functionality completely offline on modest hardware
- Bootstrap more sophisticated speech/intent recognition systems

Tools like Node-RED can be easily integrated with voice2json through MQTT.

Supported speech to text systems include pocketsphinx, Kaldi, DeepSpeech, and Julius.

To get started:

1. Run `voice2json -p <PROFILE> download-profile` to download language-specific files.
2. Your profile settings will be in `$HOME/.local/share/voice2json/<PROFILE>/profile.yml`.
3. Edit `sentences.ini` in your profile and add your custom voice commands.
4. Use the `transcribe-wav` and `recognize-intent` commands to do speech/intent recognition.

Voice2json supports multiple languages/locales, each through its own downloadable profile. I don’t speak or write any language besides U.S. English very well, so please let me know if any profile is broken or could be improved! I’m mostly Chinese Room-ing it.
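Once the `transcribe-wav` and `recognize-intent` commands mentioned above are producing results, the output can be handled by any program that reads newline-delimited JSON. The Python sketch below shows one way that might look. The field names (`intent`, `name`, `slots`), the `ChangeLightState` and `GetTime` intents, and the `parse_intents` helper are all assumptions made for illustration, so check the JSON your own profile emits before relying on them.

```python
import json

# Sample newline-delimited JSON. The field names and intent names here are
# assumed for illustration and may not match real voice2json output exactly.
SAMPLE = "\n".join(
    [
        '{"text": "turn on the lamp", "intent": {"name": "ChangeLightState"}, '
        '"slots": {"state": "on"}}',
        '{"text": "what time is it", "intent": {"name": "GetTime"}, "slots": {}}',
    ]
)


def parse_intents(ndjson_text):
    """Yield (intent_name, slots) for each non-empty JSON line (hypothetical helper)."""
    for line in ndjson_text.splitlines():
        line = line.strip()
        if not line:
            continue  # tolerate blank lines between events
        event = json.loads(line)
        intent_name = event.get("intent", {}).get("name", "")
        slots = event.get("slots", {})
        yield intent_name, slots


if __name__ == "__main__":
    for name, slots in parse_intents(SAMPLE):
        print(name, slots)
```

In a real pipeline, `SAMPLE` would be replaced by text read from standard input, so the script can sit at the end of a Unix pipe.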
